Rational Unified Process(统一软件开发过程): Overview

The Rational Unified Process® or RUP(统一软件开发过程)® product is a software engineering process. It provides a disciplined approach to assigning tasks and responsibilities within a development organization. Its goal is to ensure the production of high-quality software that meets the needs of its end users within a predictable schedule and budget.

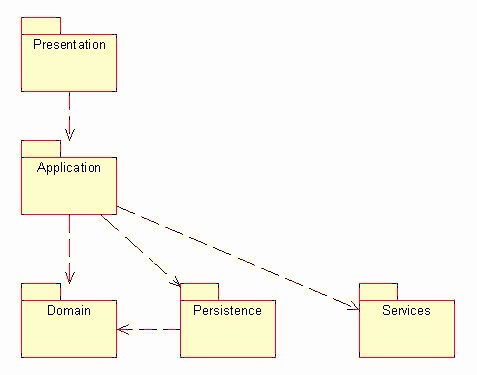

The preceding figure illustrates the overall architecture of the RUP, which has two dimensions:

- The horizontal axis represents time and shows the lifecycle aspects of the process as it unfolds. This first dimension illustrates the dynamic aspect of the process as it’s enacted and is expressed in terms of phases, iterations, and milestones.

- The vertical axis represents disciplines that logically group activities by nature. This second dimension portrays the static aspect of the process-how it’s described in terms of process components, disciplines, activities, workflows, artifacts, and roles (see Key Concepts).

The graph shows how the emphasis varies over time. For example, in early iterations you spend more time on requirements; in later iterations you spend more time on implementation.

About Rational Unified Process(统一软件开发过程)

Topics

Version information

© Copyright IBM Corp. 1987, 2004

All Rights Reserved

About this configuration

The Classic RUP(统一软件开发过程) template configuration recreates the RUP configuration that has been delivered over the years.

Classic RUP is useful as a published knowledge base that covers the gamut of RUP processes and practices. As such it is published and delivered with the RUP installation (either as a point product or in the suites). For Rational Suites users, it is the default location for Extended Help, context sensitive process guidance that is available from Rational tools’ Help menus. Very large or complex projects wishing to create RUP configurations for their purposes will find the Classic RUP configuration the most useful starting point for initial customized versions of the process. They will likely wish to harvest knowledge part way through these projects and use the Rational Process Workbench to create plug-ins that even more tightly align the process with their needs.

The following RUP plug-ins are included in this configuration:

- Formal Resources

- Informal Resources

The following process components are included as part of this configuration:

- Lifecycle

- Classic RUP

- Disciplines

- Process Components

- Tools

- Tool Mentors

- Rational Unified Process

- RUP Builder

- Rational Process Workbench

- Rational Administrator

- Rational Suite AnalystStudio

- Rational ClearCase

- Rational ClearQuest

- Rational ProjectConsole

- Rational PurifyPlus

- Rational QualityArchitect

- Rational RequisitePro

- Rational Robot

- Rational Rose

- Rational Rose RealTime

- Rational SoDA

- Rational TestManager

- Rational Test RealTime

- Rational TestFactory

- Rational XDE Developer(开发人员) - Java Platform Edition

- Rational XDE Developer - .NET Edition

- Tool Mentors

Legal statement

Browser support

Note 1: RUP does not currently support Netscape Navigator 6.x.

Note 2: Some versions of Microsoft Internet Explorer 4.x and Netscape Navigator 4.x may not be able to display all pages in RUP. To obtain the latest version of your browser, click on the appropriate icon.

Rational Unified Process(统一软件开发过程): Concepts Overview

The following is a summary of all concepts that are part of this RUP(统一软件开发过程) configuration.

- Developing Component(构件) Solutions

- Developing e-business Solutions

- Usability Engineering

- Tailoring a Process for a Small Project

- Agile Practices and RUP

- Disciplines

- Business Modeling

- Requirements

- Architecture

- Software Architecture

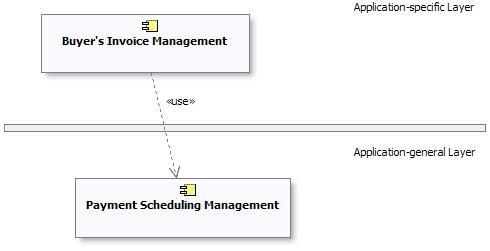

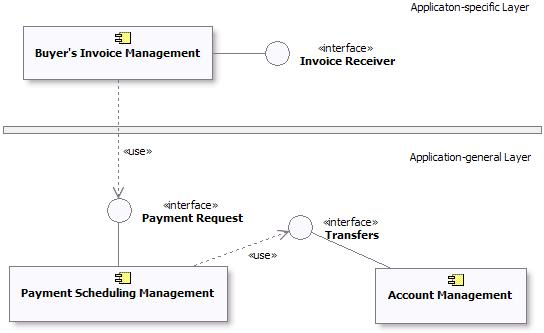

- Layering

- Prototypes

- Web Architecture(架构) Patterns

- Design and Implementation(实现) Mechanisms

- Concurrency

- Distribution Patterns

- Events and Signals

- Analysis Mechanisms

- Logical View

- Process View

- Implementation View

- Deployment View

- Component

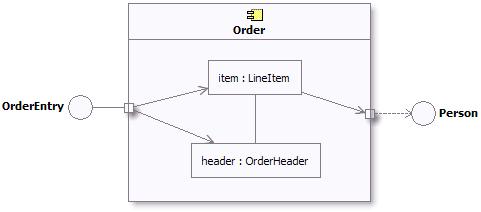

- Structured Class

- Representing Interfaces to External Systems

- Design

- Implementation

- Assessment

- Production

- Management

- Project Management

- Project Environment

- Configuration & Change Management

- Iteration

- Evaluating Quality

- Estimating Project Effort

- Risk

- Metrics

- Implementing a Process in a Project

- Agile Practices and RUP

- Mentoring

- The Underlying Model of the Rational Unified Process

- Pilot Project

- Effect of Implementing a Process

- RUP Tailoring

- Tailoring a Process for a Small Project

- Development Environment

- Process Quality

- Tools

Rational Unified Process(统一软件开发过程): Guidelines Overview

The following is a summary of all guidelines that are part of this RUP(统一软件开发过程) configuration.

- Installing and Customizing Microsoft Word Templates

- Installing and Customizing Microsoft Word Templates (Informal Set)

- Disciplines

- Business Modeling

- Business Use-Case Modeling

- Actor-Generalization in the Business Use-Case Model

- Business Use-Case Model

- Communicate-Association in the Business Use-Case Model

- Extend-Relationship in the Business Use-Case Model

- Include-Relationship in the Business Use-Case Model

- Use-Case Diagram in the Business Use-Case Model

- Use-Case-Generalization in the Business Use-Case Model

- Business Actor

- Business Use Case

- Activity Diagram in the Business Use-Case Model

- Business Analysis Modeling

- Business Analysis Model

- Diagrams in the Business Analysis Model

- Association in the Business Analysis Model

- Aggregation in the Business Analysis Model

- Generalization in the Business Analysis Model

- Going from Business Models to Systems

- Business Event

- Business Use-Case Realization

- Business Worker

- Business Entity

- Business System

- Business Architecture(架构) Document

- Assessment Workshop

- Brainstorming and Idea Reduction

- Pareto Diagrams

- Fishbone Diagrams

- Business Actor

- Business Use Case

- Business Goal

- Use-Case Diagram in the Business Use-Case Model

- Communicate-Association in the Business Use-Case Model

- Business Use-Case Model

- Include-Relationship in the Business Use-Case Model

- Extend-Relationship in the Business Use-Case Model

- Use-Case-Generalization in the Business Use-Case Model

- Business Architecture Document

- Going from Business Models to Systems

- Activity Diagram in the Business Use-Case Model

- Business Analysis Model

- Business Analysis Modeling Workshop

- Role Playing

- Aggregation in the Business Analysis Model

- Generalization in the Business Analysis Model

- Association in the Business Analysis Model

- Business Use-Case Realization

- Diagrams in the Business Analysis Model

- Business Worker

- Business Entity

- Business Vision

- Target-Organization Assessment

- Business Rules

- Business Use-Case Modeling

- Requirements

- Requirements w/ Use Cases

- Software Requirements(需求) Specification

- Supplementary Specifications - Informal Representation

- Use-Case Model

- Use-Case Diagram

- Communicate-Association

- Actor-Generalization

- Use-Case Generalization

- Include-Relationship

- Extend-Relationship

- Use-Case Package

- Use Case

- Activity Diagram in the Use-Case Model

- Actor

- Requirements Management

- Software Requirements Specification

- Brainstorming and Idea Reduction

- Storyboarding

- Use-Case Workshop

- Requirements Management Plan

- Important Decisions in Requirements

- Stakeholder Requests - Informal Representation

- Interviews

- Requirements Workshop

- Storyboard

- Requirements w/ Use Cases

- Architecture

- Concurrency

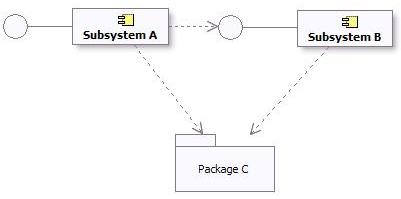

- Representing Interfaces to External Systems

- Reverse-engineering Relational Databases

- Layering

- Software Architecture Document

- Association

- Aggregation

- Generalization

- Import Dependency in Design

- Subscribe-Association

- Class Diagram

- Communication Diagram

- Sequence Diagram

- Statechart Diagram

- Design Model

- Design Class

- Testing and Evaluating Classes

- Building Web Applications with the UML

- Interface

- Design Package

- Design Subsystem

- Capsule

- Use-Case Realization

- Test Design

- Design

- Implementation

- Assessment

- Reviews

- Test

- Reviews

- Test Plan

- Iteration Assessment - Informal Representation

- Status Assessment - Informal Representation

- Review Levels

- Review Record - Informal Representation

- Production

- Management

- Project Management

- Project Environment

- Reviews

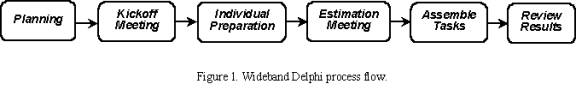

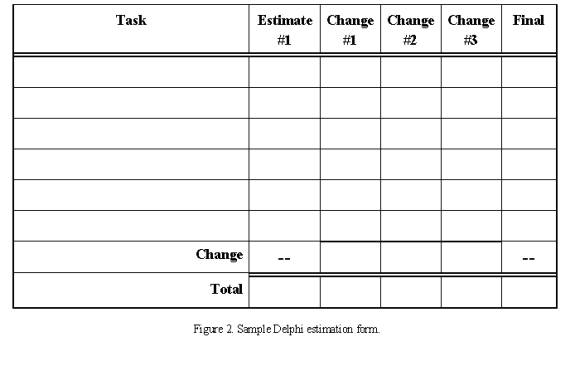

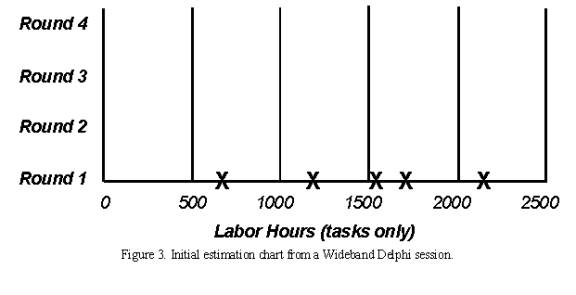

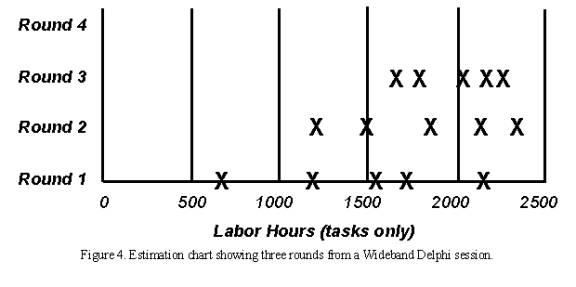

- Estimating Effort Using the Wide-Band Delphi Technique

- Process Tailoring Practices

- Process Discriminants

- Development Case

- Important Decisions in Analysis & Design

- Important Decisions in Business Modeling

- Important Decisions in Configuration & Change Management

- Important Decisions in Environment

- Important Decisions in Deployment

- Important Decisions in Implementation

- Important Decisions in Project Management

- Important Decisions in Requirements

- Important Decisions in Test

- Classifying Artifacts

- Development Case Workshop

- Iteration Plan

- Metrics

- Software Development Plan

- Risk List

- Risk List - Informal Representation

- Business Case

- Alternative Representations of Document Artifacts

- Review Levels

Glossary(术语表)(Test) - Rational Unified Process(统一软件开发过程)

A

- ABC

- ABM

- abstract

-

Of or relating to a subject in the abstract without practical purpose or intention. Not applied or practical; theoretical. Considered apart from concrete existence. Contrast: concrete. See: abstract class.

-

A concept or idea not associated with any specific instance. Synonym: abstraction.

-

A summary of the main points of an argument or theory. Synonyms: outline, synopsis.

- abstract class

-

A class that provides common behavior across a set of subclasses but is not itself designed to have instances. An abstract class represents a concept; classes derived from it represent implementations of the concept. See also: base class. Contrast: concrete class.

A class that provides common behavior across a set of subclasses but is not itself designed to have instances. An abstract class represents a concept; classes derived from it represent implementations of the concept. See also: base class. Contrast: concrete class. - abstraction

-

The creation of a view or model that suppresses unnecessary details to focus on a specific set of details of interest

-

The essential characteristics of an entity that distinguish it from all other kinds of entities. An abstraction defines a boundary relative to the perspective of the viewer.

The essential characteristics of an entity that distinguish it from all other kinds of entities. An abstraction defines a boundary relative to the perspective of the viewer. - acceptance

-

An action by which the customer accepts ownership of software products as a partial or complete performance of a contract.

- access modifier

-

A keyword that controls access to a class, method, or attribute. The access modifiers in Java are public, private, protected, and package, which is the default.

- accessor methods

-

Methods that an object provides to define the interface to its instance variables. The accessor method to return the value of an instance variable is called a get method or getter method, and the mutator method to assign a value to an instance variable is called a set method or setter method.

- ACL

-

Access control list.

- action

-

The specification of an executable statement that forms an abstraction of a computational procedure. An action typically results in a change in the state of the system, and can be realized by sending a message to an object or modifying a link or a value of an attribute.

The specification of an executable statement that forms an abstraction of a computational procedure. An action typically results in a change in the state of the system, and can be realized by sending a message to an object or modifying a link or a value of an attribute. - action sequence

- action state

-

A state that represents the execution of an atomic action, typically the invocation of an operation.

A state that represents the execution of an atomic action, typically the invocation of an operation. - activation

-

The execution of an action.

The execution of an action. - active class

-

A class representing a thread of control in the system.

-

A class whose instances are active objects. See: active object.

A class whose instances are active objects. See: active object. - active object

-

An object that owns a thread and can initiate control activity. An instance of active class.

An object that owns a thread and can initiate control activity. An instance of active class. - Active Server Page (ASP)

-

Active Server Page (Microsoft(R)), a technology mechanism for providing dynamic behavior to web applications.

- activity

-

A unit of work a role may be asked to perform.

- Activity(活动)-Based Costing (ABC)

-

A methodology that measures the cost and performance of activities, resources, and cost objects. Resources are assigned to activities, then activities are assigned to cost objects based on their use. Activity based costing recognizes the causal relationships of cost drivers to activities.

- Activity-Based Management (ABM)

-

The broad discipline that focuses on achieving customer value and company profit by way of the management of activities. It draws on activity-based costing as a major source of information.

- activity graph

-

A special case of a state machine that is used to model processes involving one or more classifiers. Contrast: statechart diagram . Synonym: activity diagram.

A special case of a state machine that is used to model processes involving one or more classifiers. Contrast: statechart diagram . Synonym: activity diagram. - actor (class)

-

Defines a set of actor instances, in which each actor instance plays the same role in relation to the system.

-

A coherent set of roles that users of use cases play when interacting with these use cases. An actor has one role for each use case with which it communicates.

A coherent set of roles that users of use cases play when interacting with these use cases. An actor has one role for each use case with which it communicates. - actor (instance)

-

Someone or something, outside the system that interacts with the system.

- actor generalization

-

An actor generalization from an actor class (descendant) to another actor class (ancestor) indicates that the descendant inherits the role the ancestor can play in a use case.

- actual parameter

-

Synonym: argument.

Synonym: argument. - Advanced Program-to-Program Communication (APPC)

-

A communication protocol used primarily in IBM environments.

- aggregate (class)

-

A class that represents the “whole” in an aggregation (whole-part) relationship. See: aggregation.

A class that represents the “whole” in an aggregation (whole-part) relationship. See: aggregation. - aggregation

-

An association that models a whole-part relationship between an aggregate (the whole) and its parts.

-

A special form of association that specifies a whole-part relationship between the aggregate (whole) and a component part. See: composition.

A special form of association that specifies a whole-part relationship between the aggregate (whole) and a component part. See: composition. - American Standard Code for Information Interchange (ASCII)

-

American standard code for information interchange. The 8-bit character encoding scheme used by most PCs and UNIX systems. It supersedes an earlier 7-bit ASCII standard.

- analysis

-

The part of the software development process whose primary purpose is to formulate a model of the problem domain. Analysis focuses on what to do; design focuses on how to do it. See: design.

- analysis & design

-

(general) activities during which strategic and tactical decisions are made to meet the required functional and quality requirements of a system. See also: Design Model.

-

A discipline in the Unified Process, whose purpose is to show how the system’s *use case*s will be realized in implementation.

- analysis class

-

An abstraction of a role played by a design element in the system, typically within the context of a use-case realization. Analysis classes may provide an abstraction for several roles, representing the common behavior of those roles. Analysis classes typically evolve into one or more design elements; for example, design *class*es and/or *capsule*s, or design *subsystem*s.

- analysis mechanism

-

An architectural mechanism used early in the design process, during the period of discovery when key classes and subsystems are being identified. Typically analysis mechanisms capture the key aspects of a solution in a way that is implementation independent. Analysis mechanisms are usually unrelated to the problem domain, but instead are “computer science” concepts. They provide specific behaviors to a domain-related class or component, or correspond to the implementation of cooperation between classes and/or components. They may be implemented as a framework. Examples include mechanisms to handle persistence, inter-process communication, error or fault handling, notification, and messaging, to name a few.

- analysis model

-

An object model that serves as an abstraction of the design model; provides the initial definition of the realization of the use cases.

- analysis pattern

-

[FOW97a] speaks of analysis patterns as,

“[…] groups of concepts that represent a common construction in business modeling. It may be relevant to only one domain, or it may span many domains.”

Therefore, in this reference, the vocabulary of the domain does intrude into the description of the pattern. There is no reason why the definition in [FOW97a] should not be extended to domains other than business modeling. Another aspect of an analysis pattern is that it is an abstract, conceptual template, intended (through binding as with any pattern) for instantiation in an analysis model, which will then need further refinement through design. The scale of an analysis pattern can vary widely, though those presented in [FOW97a] are medium in scale, and would compose to form analysis models for entire applications.

- analysis time

-

Refers to something that occurs during an analysis phase of the software development process. See: design time, modeling time.

Refers to something that occurs during an analysis phase of the software development process. See: design time, modeling time. - analyst

-

Member of the project team who is responsible for eliciting and interpreting the stakeholder needs, and communicating those needs to the entire team.

- API

- APPC

- applet

-

A Java program designed to run within a Web browser. Contrast: application.

- application

-

An act of putting to use (new techniques): an act of applying techniques.

-

Function and industry-relevant software that is determined by a particular business (for example, banking, aerospace, stock brokerage, insurance, accounting, inventory).

-

In Java programming, a self-contained, stand-alone Java program that includes main() method. Contrast: applet.

- Application Programming Interface(接口) (API)

-

A software interface that enables applications to communicate with each other. An API is the set of programming language constructs or statements that can be coded in an application program to obtain the specific functions and services provided by an underlying operating system or service program.

- architectural baseline

-

The baseline at the end of the Elaboration phase, at which time the foundation structure and behavior of the system is stabilized.

- architectural mechanism

-

Architectural mechanisms represent common concrete solutions to frequently encountered problems. They may be patterns of structure, patterns of behavior, or both. In the Rational Unified Process (RUP), architectural mechanism is used as an umbrella term for analysis mechanism, design mechanism, and implementation mechanism.

- architectural pattern

-

[BUS96] defines an architectural pattern as:

“An architectural pattern expresses a fundamental structural organization schema for software systems. It provides a set of predefined subsystems, specifies their responsibilities, and includes rules and guidelines for organizing the relationships between them.”

This is the interpretation we use in the RUP(统一软件开发过程). To elaborate a little: an architectural pattern is a pattern (that is, a solution template) at a particular scale, and is a template for concrete software architectures. It deals in system-wide properties and, typically, subsystem-scale (not class level) relationships. Architectural patterns seem, by their nature, not to be application domain dependent-the vocabulary of a particular domain seems not to intrude into the description of the pattern-although there is no reason in principle why architectural patterns cannot become specialized in this way. Compare with analysis pattern. The Software Architecture(软件架构) Document(软件架构文档) will present the architectural patterns used in the system.

- architectural view

-

A view of the system architecture from a given perspective. Focuses primarily on structure, modularity, essential components, and the main control flows.

- architecture

-

The highest level concept of a system in its environment, according to IEEE. The architecture of a software system (at a given point in time) is its organization or structure of significant components interacting through *interface*s, those components being composed of successively smaller components and interfaces.

-

The organizational structure of a system. An architecture can be recursively decomposed into parts that interact through interfaces, relationships that connect parts, and constraints for assembling parts. Parts that interact through interfaces include classes, components and subsystems.

The organizational structure of a system. An architecture can be recursively decomposed into parts that interact through interfaces, relationships that connect parts, and constraints for assembling parts. Parts that interact through interfaces include classes, components and subsystems. - architecture, executable

-

See: executable architecture.

- argument

-

A binding for a parameter that resolves to a run-time instance. Synonym: actual parameter. Contrast: parameter.

A binding for a parameter that resolves to a run-time instance. Synonym: actual parameter. Contrast: parameter. -

A data element, or value, included as a parameter in a method call. Arguments provide additional information that the called method can use to perform the requested operation.

- artifact

-

(1) A piece of information that: 1) is produced, modified, or used by a process, 2) defines an area of responsibility, and 3) is subject to version control. An artifact can be a model, a model element, or a document. A document can enclose other documents.

-

A physical piece of information that is used or produced by a software development process. Examples of Artifacts include models, source files, scripts, and binary executable files. An artifact may constitute the implementation of a deployable component. Synonym: product. Contrast: component.

A physical piece of information that is used or produced by a software development process. Examples of Artifacts include models, source files, scripts, and binary executable files. An artifact may constitute the implementation of a deployable component. Synonym: product. Contrast: component. - artifact guidelines

-

A description of how to work with a particular artifact, including how to create and revise the artifact.

- artifact set

-

A set of related artifacts which help to present one aspect of the system. Artifact(工件) sets cut across disciplines, as several artifacts are used in a number of disciplines; for example, the Risk(风险) List(风险列表), the Software Architecture(架构) Document, and the Iteration(迭代) Plan(迭代计划).

- ASCII

- ASP

-

See: active server page

- assertion

-

A logical expression specifying a program state that must exist or a set of conditions that program variables must satisfy at a particular point during program execution.

- association

-

A relationship that models a bi-directional semantic connection among instances.

-

The semantic relationship between two or more classifiers that specifies connections among their instances.

The semantic relationship between two or more classifiers that specifies connections among their instances. - association class

-

A model element that has both association and class properties. An association class can be seen as an association that also has class properties, or as a class that also has association properties.

A model element that has both association and class properties. An association class can be seen as an association that also has class properties, or as a class that also has association properties. - association end

-

The endpoint of an association, which connects the association to a classifier.

The endpoint of an association, which connects the association to a classifier. - asynchronous action

-

A request where the sending object does not pause to wait for results. Contrast: synchronous action.

A request where the sending object does not pause to wait for results. Contrast: synchronous action. - attack

-

A planned and methodical attempt to break or otherwise circumvent the normal operation of a running computer software program. Often malicious in nature, the concept of attacking computer software originated in the software hacker (A.K.A cracker) community whose members use various techniques to attack software systems, typically to circumvent security software and gain illegal entry to a host system. Examples of recognized attack techniques include buffer overflow, denial of service, resource constraint and Trojan horse. This term has subsequently been adopted by computer software testing professionals in discussing the methods by which they might expose potential bugs in a software system.

- attribute

-

An attribute defined by a class represents a named property of the class or its objects. An attribute has a type that defines the type of its instances.

-

A feature within a classifier that describes a range of values that instances of the classifier may hold.

A feature within a classifier that describes a range of values that instances of the classifier may hold.

B

- base class

- A class from which other classes or beans are derived. A base class may itself be derived from another base class. See: abstract class.

- baseline

- A reviewed and approved release of artifacts that constitutes an agreed basis for further evolution or development and that can be changed only through a formal procedure, such as change management and configuration control .

- BASIC

- Beginner’s all-purpose symbolic instruction code*,* a programming language. See: VB .

- bean

- A small component that can be used to build applications. See: JavaBean .

- beaninfo

- A companion class for a bean that defines a set of methods that can be accessed to retrieve information on the bean’s properties, events, and methods.

- behavior

The observable effects of an operation or event, including its results.

The observable effects of an operation or event, including its results.- behavioral feature

A dynamic feature of a model element , such as an operation or method .

A dynamic feature of a model element , such as an operation or method .- behavioral model aspect

A model aspect that emphasizes the behavior of the instances in a system, including their methods , collaborations , and state histories.

A model aspect that emphasizes the behavior of the instances in a system, including their methods , collaborations , and state histories.- beta testing

- Pre-release testing in which a sampling of the intended customer base tries out the product.

- binary association

An association between two classes . A special case of an n-ary association .

An association between two classes . A special case of an n-ary association .- binding

The creation of a model element from a template by supplying arguments for the parameters of the template.

The creation of a model element from a template by supplying arguments for the parameters of the template.- boolean

An enumeration whose values are true and false.

An enumeration whose values are true and false.- boolean expression

An expression that evaluates to a boolean value.

An expression that evaluates to a boolean value.- boundary class

- A class used to model communication between the system’s environments and its inner workings.

- break point

- A point in a computer program where the execution will be halted.

- build

- An operational version of a system or part of a system that demonstrates a subset of the capabilities to be provided in the final product.

- business actor (class)

- Defines a set of business-actor instances, in which each business-actor instance plays the same role in relation to the business.

- business actor (instance)

- Someone or something, outside the business that interacts with the business.

- business analysis model

- An object model describing the realization of business use cases . Synonym: business object model.

- business architecture

- Business architecture is an organized set of elements with clear relationships to one another, which together form a whole defined by its functionality. The elements represent the organizational and behavioral structure of a business, and show abstractions of the key processes and structures of the business.

- business creation

- To perform business engineering where the goal is to create a new business process , a new line of business or a new organization.

- business engineering

- A set of techniques a company uses to design its business according to specific goals. Business engineering techniques can be used for both business reengineering, business improvement , and business creation .

- business entity

- A business entity represents a significant and persistent piece of information that is manipulated by business actors and business workers .

- business event

- A business event describes a significant occurrence in space and time, of importance to the business. Business events are used to signal between business processes and are usually associated with business entities .

- business goal

- A business goal is a requirement that must be satisfied by the business. Business goals describe the desired value of a particular measure at some future point in time and can therefore be used to plan and manage the activities of the business. Also see business objective .

- business improvement

- To perform business engineering where the work of change is local and does not span the entire business. It involves trimming costs and lead times and monitoring service and quality.

- business modeling

- Encompasses all modeling techniques you can use to visually model a business. These are a subset of the techniques you may use to perform business engineering .

- business objective

- The commonly-used term for high-level business goals . Because business objectives are usually abstract, they are difficult to measure and are therefore translated into more measurable lower-level business goals.

- business process

- A group of logically related activities that use the resources of the organization to provide defined results in support of the organization’s objectives. In the RUP, we define business processes using business use cases , which show the expected behavior of the business, and business use-case realizations , which show how that behavior is realized by business workers and business entities . See also: process .

- business process engineering

- See: business engineering .

- business reengineering

- To perform business engineering where the work of change includes taking a comprehensive view of the entire existing business and think through why you do what you do. You question all existing business processes and try to find completely new ways of reconstructing them to achieve radical improvements. Other names for this are business process reengineering (BPR) and process innovation.

- business rule

- A declaration of policy or condition that must be satisfied within the business. Business rules can be captured in models, in documents or in both.

- business strategy

- The business strategy defines the principles and goals for realizing the business idea. It consists of a collection of long-term business objectives that will ultimately result in the achievement of the business vision.

- business system

- A business system encapsulates a set of roles and resources that together fulfill a specific purpose, and defines a set of responsibilities with which that purpose can be achieved.

- business use-case (class)

- A business use case defines a set of business use-case instances, where each instance is a sequence of actions a business performs that yields an observable result of value to a particular business actor. A business use-case class contains all main, alternate workflows related to producing the “observable result of value”.

- business use-case (instance)

- A sequence of actions performed by a business that yields an observable result of value to a particular business actor.

- business use-case model

- A model of the business intended functions. The business use-case model is used as an essential input to identify roles and deliverables in the organization.

- business use-case package

- A business use-case package is a collection of business use cases, business actors, relationships, diagrams, and other packages; it is used to structure the business use-case model by dividing it into smaller parts.

- business use-case realization

- A business use-case realization describes how the workflow of a particular business use case is realized within the business analysis model, in terms of collaborating business objects.

- business worker

- A business worker represents a role or set of roles in the business. A business worker interacts with other business workers and manipulates business entities while participating in business use-case realizations .

C

- call

An action state that invokes an operation on a classifier .

An action state that invokes an operation on a classifier .- call-level interface (CLI)

- A callable API for database access, which is an alternative to an embedded SQL application program interface. In contrast to embedded SQL, CLI does not require precompiling or binding by the user, but instead provides a standard set of functions to process SQL statements and related services at run time.

- capsule

- A specific design pattern which represents an encapsulated thread of control in the system. A capsule is a stereotyped class with a specific set of required and restricted associations and properties .

- capsule role

- Capsule roles represent a specification of the type of capsule that can occupy a particular position in a capsule’s collaboration or structure. Capsule roles are strongly owned by the container capsule and cannot exist independently of it. A capsule’s structural decomposition usually includes a network of collaborating capsule roles joined by connectors.

- cardinality

The number of elements in a set. Contrast: multiplicity .

The number of elements in a set. Contrast: multiplicity .- CBD

- See: component-based development

- CCB

- See: change control board

- CDR

- See: critical design review

- CGI

- See: common gateway interface

- change control board (CCB)

- The role of the CCB is to provide a central control mechanism to ensure that every change request is properly considered, authorized and coordinated.

- change management

- The activity of controlling and tracking changes to artifacts . See also: scope management .

- Change Request(变更请求) (CR)

- A general term for any request from a stakeholder to change an artifact or process . Documented in the Change Request is information on the origin and impact of the current problem, the proposed solution, and its cost. See also: enhancement request, defect.

- checkpoints

- A set of conditions that well-formed artifacts of a particular type should exhibit. May also be stated in the form of questions which should be answered in the affirmative.

- child

In a generalization relationship, the specialization of another element, the parent. See: subclass, subtype. Contrast: parent.

In a generalization relationship, the specialization of another element, the parent. See: subclass, subtype. Contrast: parent.- class

A description of a set of objects that share the same attributes, operations, methods, relationships, and semantics. A class may use a set of interfaces to specify collections of operations it provides to its environment. See: interface.

A description of a set of objects that share the same attributes, operations, methods, relationships, and semantics. A class may use a set of interfaces to specify collections of operations it provides to its environment. See: interface.- class diagram

A diagram that shows a collection of declarative (static) model elements, such as classes, types, and their contents and relationships.

A diagram that shows a collection of declarative (static) model elements, such as classes, types, and their contents and relationships.- class hierarchy

- The relationships among classes that share a single inheritance. All Java classes inherit from the Object class.

- classifier

A mechanism that describes behavioral and structural features. Classifiers include interfaces, classes, datatypes, and components.

A mechanism that describes behavioral and structural features. Classifiers include interfaces, classes, datatypes, and components.- class library

- A collection of classes.

- class method

- See: method.

- CLI

- See: call-level interface.

- client

A classifier that requests a service from another classifier. Contrast: supplier.

A classifier that requests a service from another classifier. Contrast: supplier.- client/server

- The model of interaction in distributed data processing where a program at one location sends a request to a program at another location and awaits a response. The requesting program is called a client, and the answering program is called a server.

- CM

- See: configuration management .

- COBOL

- Common Business Oriented Language

- cohesion

- The congenital union of components of the same kind that depend on one another. The act or state of sticking together; close union. Contrast: coupling.

- collaboration

- (1) Is a description of a collection of objects that interact to implement some behavior within a context. It describes a society of cooperating objects assembled to carry out some purpose.

- (2) It captures a more holistic view of behavior in the exchange of messages within a network of objects.

- (3) Collaborations show the unity of the three major structures underlying computation: data structure, control flow, and data flow.

- (4) A collaboration has a static and a dynamic part. The static part describes the roles that objects and links play in an instantiation of the collaboration. The dynamic part consists of one or more dynamic interactions that show message flow over time in the collaboration to perform computations. A collaboration may have a set of messages to describe its dynamic behavior.

- (5) A collaboration with messages is an interaction.

The specification of how an operation or classifier, such as a use case, is realized by a set of classifiers and associations playing specific roles used in a specific way. The collaboration defines an interaction. See: interaction.

The specification of how an operation or classifier, such as a use case, is realized by a set of classifiers and associations playing specific roles used in a specific way. The collaboration defines an interaction. See: interaction.- collaboration diagram

- This term was changed to communication diagram in UML 2.0.

- column

- An attribute of a table in a database.

- COM

- Component(构件) object model (Microsoft). A software architecture from DEC and Microsoft, allowing interoperation between ObjectBroker and OLE (Object linking and embedding). Microsoft later evolved COM into DCOM.

- comment

An annotation attached to an element or a collection of elements. A note has no semantics. Contrast: constraint.

An annotation attached to an element or a collection of elements. A note has no semantics. Contrast: constraint.- commit

- The operation that ends a unit of work to make permanent the changes it has made to resources (transaction or data).

- common gateway interface (CGI)

- A standard protocol through which a Web server can execute programs running on the server machine. CGI programs are executed in response to requests from Web client browsers.

- common object request broker architecture (CORBA)

- A middleware specification which defines a software bus-the Object Request Broker (ORB)-that provides the infrastructure.

- communicates-association

- An association between an actor class and a use case class, indicating that their instances interact. The direction of the association indicates the initiator of the communication (Unified Process convention).

- communication association

In a deployment diagram an association between nodes that implies a communication. See: deployment diagram.

In a deployment diagram an association between nodes that implies a communication. See: deployment diagram.- communication diagram

- (1) Formerly named collaboration diagram, a communication diagram describes a pattern of interaction among objects; it shows the objects participating in the interaction by their links to each other and the messages they send to each other.

- (2) It is a class diagram that contains classifier roles and association roles rather than just classifiers and associations.

- (3) Communication diagrams and sequence diagrams both show interactions, but they emphasize different aspects. Sequence diagrams show time sequences clearly but do not show object relationships explicitly. Communication diagrams show object relationships clearly, but time sequences must be obtained from sequence numbers.

A diagram that shows interactions organized around the structure of a model, using either classifiers and associations or instances and links. Unlike a sequence diagram, a communication diagram shows the relationships among the instances. Sequence diagrams and communication diagrams express similar information, but show it in different ways. See: sequence diagram.

A diagram that shows interactions organized around the structure of a model, using either classifiers and associations or instances and links. Unlike a sequence diagram, a communication diagram shows the relationships among the instances. Sequence diagrams and communication diagrams express similar information, but show it in different ways. See: sequence diagram.- compile time

Refers to something that occurs during the compilation of a software module. See: modeling time, run time.

Refers to something that occurs during the compilation of a software module. See: modeling time, run time.- component

- A non-trivial, nearly independent, and replaceable part of a system that fulfills a clear function in the context of a well-defined architecture. A component conforms to and provides the realization of a set of interfaces.

A modular, deployable, and replaceable part of a system that encapsulates implementation and exposes a set of interfaces. A component is typically specified by one or more classifiers (e.g., implementation classes) that reside on it, and may be implemented by one or more artifacts (e.g., binary, executable, or script files). Contrast: artifact.

A modular, deployable, and replaceable part of a system that encapsulates implementation and exposes a set of interfaces. A component is typically specified by one or more classifiers (e.g., implementation classes) that reside on it, and may be implemented by one or more artifacts (e.g., binary, executable, or script files). Contrast: artifact.- component-based development (CBD)

- The creation and deployment of software-intensive systems assembled from components as well as the development and harvesting of such components.

- component diagram

A diagram that shows the organizations and dependencies among components.

A diagram that shows the organizations and dependencies among components.- component model

- An architecture and an API that allows developers to define reusable segments of code that can be combined to create a program. VisualAge for Java uses the JavaBeans component model.

- composite [class]

A class that is related to one or more classes by a composition relationship. See: composition.

A class that is related to one or more classes by a composition relationship. See: composition.- composite aggregation

Synonym: composition.

Synonym: composition.- composite bean

- A bean that is composed of other beans. A composite bean can contain visual beans, nonvisual beans, or both. See also: bean.

- composite state

A state that consists of either concurrent (orthogonal) substates or sequential (disjoint) substates. See: substate.

A state that consists of either concurrent (orthogonal) substates or sequential (disjoint) substates. See: substate.- composite substate

A substate that can be held simultaneously with other substates contained in the same composite state. See: composite state. Synonym: region.

A substate that can be held simultaneously with other substates contained in the same composite state. See: composite state. Synonym: region.- composition

A form of aggregation association with strong ownership and coincident lifetime as part of the whole. Parts with non-fixed multiplicity may be created after the composite itself, but once created they live and die with it; that is, they share lifetimes. Such parts can also be explicitly removed before the death of the composite. Composition may be recursive. See also: composite aggregation.

A form of aggregation association with strong ownership and coincident lifetime as part of the whole. Parts with non-fixed multiplicity may be created after the composite itself, but once created they live and die with it; that is, they share lifetimes. Such parts can also be explicitly removed before the death of the composite. Composition may be recursive. See also: composite aggregation.- computation independent model (CIM)

- [OMG03] defines this so:

“A computation independent model is a view of a system from the computation independent viewpoint. A CIM does not show details of the structure of systems. A CIM is sometimes called a domain model and a vocabulary that is familiar to the practitioners of the domain in question is used in its specification.”

- concrete

- adj.Of or relating to an actual, specific thing or instance. Capable of being perceived by the senses; not abstract or imaginary. Contrast: abstract. See: concrete class.

- concrete class

A class that can be directly instantiated. Contrast: abstract class.

A class that can be directly instantiated. Contrast: abstract class.- concurrency

The occurrence of two or more activities during the same time interval. Concurrency(并发) can be achieved by interleaving or simultaneously executing two or more threads. See: thread.

The occurrence of two or more activities during the same time interval. Concurrency(并发) can be achieved by interleaving or simultaneously executing two or more threads. See: thread.- concurrent substate

A substate that can be held simultaneously with other substates contained in the same composite state. See: composite substate. Contrast: disjoint substate.

A substate that can be held simultaneously with other substates contained in the same composite state. See: composite substate. Contrast: disjoint substate.- configuration

-

- general: The arrangement of a system or network as defined by the nature, number, and chief characteristics of its functional units; applies to both hardware or software configuration.

- (2) The requirements, design, and implementation that define a particular version of a system or system component. See: configuration management.

- configuration item

- [ISO95] An entity in a configuration that satisfies an end-use function and can be uniquely identified at a given reference point.

- configuration management

- [ISO95] A supporting process whose purpose is to identify, define, and baseline items; control modifications and releases of these items; report and record status of the items and modification requests; ensure completeness, consistency and correctness of the items; and control storage, handling and delivery of the items.

- constraint

A semantic condition or restriction. Certain constraints are predefined in the UML, others may be user defined. Constraints are one of three extensibility mechanisms in UML. See: tagged value, stereotype.

A semantic condition or restriction. Certain constraints are predefined in the UML, others may be user defined. Constraints are one of three extensibility mechanisms in UML. See: tagged value, stereotype.- construction

- The third phase of the Unified Process, in which the software is brought from an executable architectural baseline to the point at which it is ready to be transitioned to the user community.

- constructor

- A special class method that has the same name as the class and is used to construct and possibly initialize objects of its class type.

- container

(1) An instance that exists to contain other instances, and that provides operations to access or iterate over its contents; for example, arrays, lists, sets.

(1) An instance that exists to contain other instances, and that provides operations to access or iterate over its contents; for example, arrays, lists, sets.- (2) A component that exists to contain other components.

- containment hierarchy

A namespace hierarchy consisting of model elements , and the containment relationships that exist between them. A containment hierarchy forms an acyclic graph.

A namespace hierarchy consisting of model elements , and the containment relationships that exist between them. A containment hierarchy forms an acyclic graph.- context

A view of a set of related modeling elements for a particular purpose, such as specifying an operation .

A view of a set of related modeling elements for a particular purpose, such as specifying an operation .- control class

- A class used to model behavior specific to one, or to several use cases .

- conversational

- A communication model where two distributed applications exchange information by way of a conversation; typically one application starts (or allocates) the conversation, sends some data, and allows the other application to send some data. Both applications continue in turn until one decides to finish (or de-allocate). The conversational model is a synchronous form of communication.

- Small files that your Web browser creates at the request of Web sites you visit; the browser sends the contents of the file to the site upon subsequent visits.

- CORBA

- See: common object request broker architecture

- coupling

- The degree to which components depend on one another. There are two types of coupling, “tight” and “loose”. Loose coupling is desirable to support an extensible software architecture but tight coupling may be necessary for maximum performance. Coupling is increased when the data exchanged between components becomes larger or more complex. Contrast: cohesion.

- CR

- See: change request

- CRC

- Class-responsibility collaborators. This is a technique in object-oriented development, originally proposed by Ward Cunningham and Kent Beck, to help define what objects should do in the system (their responsibilities), and identify other objects (the collaborators) that are involved in fulfilling these responsibilities. The technique is described in [WIR90]. CRC cards are a way of capturing these results using ordinary index cards.

- critical design review (CDR)

- In the waterfall life cycle, the major review held when the detailed design is completed.

- CRUPIC STMPL

- This acronym represents categories that can be used both in the definition of product requirements and in the assessment of product quality. Broken into two parts, the first part represents operational categories - capability, reliability, usability, performance, installability, compatibility - and the second part represents developmental categories - supportability, testability, maintainability, portability, localizability. See also: FURPS+.

- customer

- A person or organization, internal or external to the producing organization, who takes financial responsibility for the system. In a large system this may not be the end user. The customer is the ultimate recipient of the developed product and its artifacts. See also: stakeholder.

- cycle

- Synonyms: lifecycle, development cycle . See also: test cycle .

D

- DASD

- database

-

(1) A collection of related data stored together with controlled redundancy according to a scheme to serve one or more applications.

-

(2) All data files stored in the system.

-

(3) A set of data stored together and managed by a database management system.

- database management system (DBMS)

-

A computer program that manages data by providing the services of centralized control, data independence, and complex physical structures for efficient access, integrity, recovery, concurrency control, privacy, and security.

- datatype

-

A descriptor of a set of values that lack identity and whose operations do not have side effects. Datatypes include primitive predefined types and user-definable types. Predefined types include numbers, string and time. User-definable types include enumerations.

A descriptor of a set of values that lack identity and whose operations do not have side effects. Datatypes include primitive predefined types and user-definable types. Predefined types include numbers, string and time. User-definable types include enumerations. - DBA

-

Database administrator

- DBCS

- DBMS

- DCE

- DCOM

-

Distributed component object model (Microsoft). Microsoft’s extension of their Component Object Model (COM) to support objects distributed across a network.

- deadlock

-

A condition in which two independent threads of control are blocked, each waiting for the other to take some action. Deadlock often arises from adding synchronization mechanisms to avoid race conditions .

- defect

-

An anomaly, or flaw, in a delivered work product. Examples include such things as omissions and imperfections found during early lifecycle phases and symptoms of faults contained in software sufficiently mature for test or operation. A defect can be any kind of issue you want tracked and resolved. See also: change request .

- defining model

-

The model on which a repository is based. Any number of repositories can have the same defining model.

The model on which a repository is based. Any number of repositories can have the same defining model. - delegation

-

The ability of an object to issue a message to another object in response to a message. Delegation can be used as an alternative to inheritance. Contrast: inheritance .

The ability of an object to issue a message to another object in response to a message. Delegation can be used as an alternative to inheritance. Contrast: inheritance . - deliverable

-

An output from a process that has a value, material or otherwise, to a customer or other stakeholder .

- de-marshal

-

To deconstruct an object so that it can be written as a stream of bytes. See also: flatten, serialize.

- demilitarized zone (DMZ)

-

This term is now commonly used in the industry to describe a sub-network, typically used for web servers that are protected by firewalls from both the external Internet and a company’s internal network.

- dependency

-

A relationship between two modeling elements , in which a change to one modeling element (the independent element) will affect the other modeling element (the dependent element).

A relationship between two modeling elements , in which a change to one modeling element (the independent element) will affect the other modeling element (the dependent element). - deployment

-

A discipline in the software-engineering process, whose purpose is to ensure a successful transition of the developed system to its users. Included are artifacts such as training materials and installation procedures.

- deployment diagram

-

A diagram that shows the configuration of run-time processing nodes and the components , processes , and objects that live on them. Components represent run-time manifestations of code units. See also: component diagram .

A diagram that shows the configuration of run-time processing nodes and the components , processes , and objects that live on them. Components represent run-time manifestations of code units. See also: component diagram . - deployment environment

-

A specific instance of a configuration of hardware and software established for the purpose of installing and running the developed software for its intended use. See also: test environment , environment .

- deployment unit

-

A set of objects or components that are allocated to a process or a processor as a group. A distribution unit can be represented by a run-time composite or an aggregate .

A set of objects or components that are allocated to a process or a processor as a group. A distribution unit can be represented by a run-time composite or an aggregate . - deployment view

-

An architectural view that describes one or several system configurations; the mapping of software components (tasks, modules) to the computing nodes in these configurations.

- derived element

-

A model element that can be computed from another element, but that is shown for clarity or that is included for design purposes even though it adds no semantic information.

A model element that can be computed from another element, but that is shown for clarity or that is included for design purposes even though it adds no semantic information. - deserialize

-

To construct an object from a de-marshaled state. See also: marshal , resurrect .

- design

-

The part of the software development process whose primary purpose is to decide how the system will be implemented. During design, strategic and tactical decisions are made to meet the required functional and quality requirements of a system. See: analysis .

The part of the software development process whose primary purpose is to decide how the system will be implemented. During design, strategic and tactical decisions are made to meet the required functional and quality requirements of a system. See: analysis . - design mechanism

-

An architectural mechanism used during the design process, during the period in which the details of the design are being worked out. They are related to associated analysis mechanisms, of which they are additional refinements, and they may bind one or more architectural and design patterns. There is not necessarily any difference in scale between the analysis mechanism and the design mechanism- it is thus possible to speak of a persistence mechanism at the analysis level and the design level and mean the same thing, but at a different level of refinement. A design mechanism assumes some details of the implementation environment, but it is not tied to a specific implementation (as is an implementation mechanism). For example, the analysis mechanism for inter-process communication may be refined by several design mechanisms for interprocess communication (IPC): shared memory, function-call-like IPC, semaphore-based IPC, and so on. Each design mechanism has certain strengths and weaknesses; the choice of a particular design mechanism is determined by the characteristics of the objects using the mechanism.

- design model

-

An object model describing the realization of use cases ; serves as an abstraction of the implementation model and its source code.

- design package

-

A collection of classes , relationships , use-case realizations , diagrams , and other packages , it is used to structure the design model by dividing it into smaller parts. It’s the logical analogue of the implementation subsystem .

- design pattern

-

[GAM94] defines a design pattern as:

“A design pattern provides a scheme for refining the subsystems or components of a software system, or the relationships between them. It describes a commonly-recurring structure of communicating components that solves a general design problem within a particular context.”

Design patterns are medium to small-scale patterns, smaller in scale than architectural patterns but typically independent of programming language. When a design pattern is bound, it will form a portion of a concrete design model (perhaps a portion of a design mechanism). Design patterns tend, because of their level, to be applicable across domains.

- design subsystem

-

A model element that represents a part of a system. The design subsystem encapsulates behavior by packaging other model elements (classes or other design subsystems) that provide its behavior.It also exposes a set of interfaces which define the behavior it can perform.

- design time

-

Refers to something that occurs during a design phase of the software development process. See: modeling time . Contrast: analysis time .

Refers to something that occurs during a design phase of the software development process. See: modeling time . Contrast: analysis time . - developer

-

A person responsible for developing the required functionality in accordance with project-adopted standards and procedures. This can include performing activities in any of the requirements, analysis & design, implementation, and test disciplines.

- development case

-

The software-engineering process used by the performing organization. It is developed as a configuration, or customization, of the Unified Process product, and adapted to the project’s needs.

- development cycle

-

Synonyms: lifecycle, cycle . See also: test cycle .

- development process

-

A set of partially ordered steps performed for a given purpose during software development, such as constructing models or implementing models.

A set of partially ordered steps performed for a given purpose during software development, such as constructing models or implementing models. - device

-

A type of node which provides supporting capabilities to a processor . Although it may be capable of running embedded programs (device drivers), it cannot execute general-purpose applications, but instead exists only to serve a processor running general-purpose applications.

- diagram

-

A graphical depiction of all or part of a model .

-

A graphical presentation of a collection of model elements , most often rendered as a connected graph of arcs (relationships) and vertices (other model elements). UML supports the following diagrams: class diagram , object diagram , use-case diagram , sequence diagram , communication diagram , statechart diagram , activity diagram , component diagram , and deployment diagram .

A graphical presentation of a collection of model elements , most often rendered as a connected graph of arcs (relationships) and vertices (other model elements). UML supports the following diagrams: class diagram , object diagram , use-case diagram , sequence diagram , communication diagram , statechart diagram , activity diagram , component diagram , and deployment diagram . - direct access storage device (DASD)

-

A device that allows storage to be directly accessed, such as a disk drive (as opposed to a tape drive, which is accessed sequentially).

- discipline

-

A discipline is a collection of related activities that are related to a major ‘area of concern’. The disciplines in RUP include: Business Modeling(业务建模), Requirements(需求), Analysis & Design(分析与设计), Implementation(实现), Test(测试), Deployment(部署), Configuration & Change Management(配置与变更管理), Project Management(项目管理), Environment.

- disjoint substate

-

A substate that cannot be held simultaneously with other substates contained in the same composite state. See: composite state . Contrast: concurrent substate .

A substate that cannot be held simultaneously with other substates contained in the same composite state. See: composite state . Contrast: concurrent substate . - distributed computing environment (DCE)

-

Distributed Computing Environment. Adopted by the computer industry as a de facto standard for distributed computing. DCE allows computers from a variety of vendors to communicate transparently and share resources such as computing power, files, printers, and other objects in the network.

- distributed processing

-

Distributed processing is an application or systems model in which function and data can be distributed across multiple computing resources connected on a LAN or WAN. See: client/server computing.

- DLL

- DMZ

-

See: de-militarized zone

- DNS

-

See: domain name server

- document

-

A document is a collection of information that is intended to be represented on paper, or in a medium using a paper metaphor. The paper metaphor includes the concept of pages, and it has either an implicit or explicit sequence of contents. The information is in text or two-dimensional pictures. Examples of paper metaphors are word processor documents, spreadsheets, schedules, Gantt charts, web-pages, or overhead slide presentations.

- document description

-

Describes the intended content of a particular document.

- document template

-

A concrete tool template, available for tools such as a Adobe(R) FrameMaker(R) or Microsoft(R) Word(R).

- domain

-

An area of knowledge or activity characterized by a family of related values.

-

An area of knowledge or activity characterized by a set of concepts and terminology understood by practitioners in that area.

An area of knowledge or activity characterized by a set of concepts and terminology understood by practitioners in that area. - domain (database)

-

A user defined data type that defines a valid range of values for a column of a table in a database.

- domain model

-

A domain model captures the most important types of objects in the context of the domain. The domain objects represent the entities that exist or events that transpire in the environment in which the system works. The domain model is a subset of the business analysis model.

- domain name server

-

A system for translating domain names such as www.software.ibm.com into numeric Internet protocol addresses such as 123.45.67.8.

- double-byte character set (DBCS)

-

A set of characters in which each character is represented by 2 bytes. Languages such as Japanese, Chinese, and Korean, which contain more symbols than can be represented by 256 code points, require double- byte character sets. Contrast: single-byte character set.

- dynamically linked library (DLL)

-

A file containing executable code and data bound to a program at run time rather than at link time (the final phase of compilation). This means that the same block of library code can be shared between several tasks rather than each task containing copies of the routines it uses. The C++ Access Builder generates beans and C++ wrappers that let your Java programs access C++ DLLs.

- dynamic classification

-

A semantic variation of generalization in which an object may change type or role . Contrast: static classification .

A semantic variation of generalization in which an object may change type or role . Contrast: static classification . - dynamic information

-

Information that is created at the time the user requests it. Dynamic information changes over time so that each time users view it, they see different content.

E

- earned value

- [MSP97] defines this as:

“A measure of the value of work performed so far. Earned value uses original estimates and progress-to-date to show whether the actual costs incurred are on budget and whether the tasks are ahead or behind the baseline plan.”

- e-business

- (1) the transaction of business over an electronic medium such as the Internet

- (2) a business that uses Internet technologies and network computing in their internal business processes (via intranets), their business relationships (via extranets), and the buying and selling of goods, services, and information (via electronic commerce).

- EJB

- See: enterprise javabean

- elaboration

- The second phase of the process where the product vision and its architecture are defined.

- element

An atomic constituent of a model .

An atomic constituent of a model .- encapsulation

- The hiding of a software object’s internal representation. The object provides an interface that queries and manipulates the data without exposing its underlying structure.

- enclosed document

- A document can be enclosed by another document to collect a set of documents into a whole; the enclosing document as well as the individual enclosures are regarded as separate artifacts .

- enhancement request

- A type of stakeholder request that specifies a new feature or functionality of the system. See also: change request.

- enterprise javabean (EJB)

- An EJB is a non-visual, remote object designed to run on a server and be invoked by clients. An EJB can be built out of multiple, non-visual JavaBeans. EJBs are intended to live on one machine and be invoked remotely from another machine. They are platform-independent. Once a bean is written, it can be used on any client or server platform that supports Java.

- entity class

- A class used to model information that has been stored by the system, and the associated behavior. A generic class, reused in many use cases , often with persistent characteristics. An entity class defines a set of entity objects, which participate in several use cases and typically survive those use cases.

- entry action

An action executed upon entering a state in a state machine regardless of the transition taken to reach that state.

An action executed upon entering a state in a state machine regardless of the transition taken to reach that state.- enumeration

A list of named values used as the range of a particular attribute type. For example, RGBColor = {red, green, blue}. Boolean is a predefined enumeration with values from the set {false, true}.

A list of named values used as the range of a particular attribute type. For example, RGBColor = {red, green, blue}. Boolean is a predefined enumeration with values from the set {false, true}.- environment

- (1) A discipline in the software-engineering process, whose purpose is to define and manage the environment in which the system is being developed. Includes process descriptions, configuration management, and development tools.

- (2) A specific instance of a configuration of hardware and software, established for the purpose of software development, software testing, or in which the final product is deployed. See also: test environment , deployment environment .

- equivalence class

- A classification of equivalent values for which a object is expected to behave similarly. This technique can be applied to help analyze the most significant tests to conduct when there are too many potential tests to conduct in the available time. Synonyms: equivelance partition, domain.

- ERP

- Enterprise Resource Planning

- evalution mission

- A brief, easy-to-remember statement that defines the essence of the work objectives for the test team for a given work schedule. Typically reconsidered per iteration, the evaluation mission provides focus to keep the team working productively to benefit the testing stakeholders. Some examples of mission statements include: find important problems fast, advise about perceived quality and verify to a specification.

- event

The specification of a significant occurrence that has a location in time and space. In the context of state diagrams , an event is an occurrence that can trigger a transition.

The specification of a significant occurrence that has a location in time and space. In the context of state diagrams , an event is an occurrence that can trigger a transition.- event-to-method connection

- A connection from an event generated by a bean to a method of a bean. When the connected event occurs, the method is executed.

- evolution

- The life of the software after its initial development cycle; any subsequent cycle, during which the product evolves.

- evolutionary

- An iterative development strategy that acknowledges that user needs are not fully understood and therefore requirements are refined in each succeeding iteration (elaboration phase).

- executable architecture

- An executable architecture is a partial implementation of the system, built to demonstrate selected system functions and properties, in particular those satisfying non-functional requirements. It is built during the elaboration phase to mitigate risks related to performance, throughput, capacity, reliability and other ‘ilities’, so that the complete functional capability of the system may be added in the construction phase on a solid foundation, without fear of breakage. It is the intention of the RUP that the executable architecture be built as an evolutionary prototype, with the intention of retaining what is found to work (and satisfies requirements), and making it part of the deliverable system.

- exit action

An action executed upon exiting a state in a state machine regardless of the transition taken to exit that state.

An action executed upon exiting a state in a state machine regardless of the transition taken to exit that state.- exploratory testing

- A technique for testing computer software that requires minimal planning and tolerates limited documentation for the target-of-test in advance of test execution, relying on the skill and knowledge of the tester and feedback from test results to guide the ongoing test effort. Exploratory testing is often conducted in short sessions in which feedback gained from one session is used to dynamically plan subsequent sessions. For more detail, see: [BAC01a].

- export

In the context of packages, to make an element visible outside its enclosing namespace. See: visibility. Contrast: export[OMA], import.

In the context of packages, to make an element visible outside its enclosing namespace. See: visibility. Contrast: export[OMA], import.- expression

A string that evaluates to a value of a particular type. For example, the expression “(7 + 5 * 3)” evaluates to a value of type number.

A string that evaluates to a value of a particular type. For example, the expression “(7 + 5 * 3)” evaluates to a value of type number.- extend

A relationship from an extension use case to a base use case, specifying how the behavior defined for the extension use case can be inserted into the behavior defined for the base use case.